AI Memory Crunch Sends Sandisk Up 552%, Micron to $840B

Memory stocks surge as AI demand outstrips supply. Sandisk, Micron, and Intel are positioned for years of scarcity, with Sandisk's projected gross margins above 80%.

The artificial intelligence buildout has opened a second front in the semiconductor boom, this one in memory chips. The least glamorous corner of the silicon supply chain is suddenly the most profitable.

By a wide margin.

SanDisk Corp. has gained 552 percent year-to-date through early May, according to market data compiled by AOL Finance, while Micron Technology has surged roughly 700 percent over the past twelve months to a market capitalization of $840 billion. Intel Corp., the legacy CPU maker now pivoting hard into foundry and memory-adjacent silicon, is up 192.87 percent over the same stretch. All three have left the broader Philadelphia Semiconductor Index, itself up roughly 40 percent, in the dust.
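Gains of this size are easy to misread: a 552 percent gain means the price is 6.52 times its starting level, not 5.52 times. A minimal Python sketch of that conversion, using only the percentages quoted above (the function name is illustrative, and the figures cover different time periods, so the multiples are not directly comparable):

```python
def gain_to_multiple(percent_gain: float) -> float:
    """Convert a percentage gain into a price multiple (ending price / starting price)."""
    return 1 + percent_gain / 100


# Gains as quoted in the article; the Micron and index figures are "roughly" values.
quoted_gains = {
    "SanDisk": 552.0,
    "Micron": 700.0,
    "Intel": 192.87,
    "Philadelphia Semiconductor Index": 40.0,
}

for name, gain in quoted_gains.items():
    multiple = gain_to_multiple(gain)
    print(f"{name}: +{gain:g}% is about {multiple:.2f}x the starting price")
```

Read this way, the index's roughly 40 percent gain is a 1.4x move, against about 6.5x for SanDisk and about 8x for Micron.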

No precedent exists for this rally in a sector known for brutal boom-bust cycles. What separates this run from prior peaks is a structural supply shortage that executives say will persist for years — not a transient mismatch tied to a single product cycle. Memory, for the first time, is the binding constraint on AI infrastructure, and the companies that manufacture it hold the pricing power that GPU makers commanded in 2023 and 2024.

“Key customers are only getting 50 percent to two-thirds of their requirements due to the supply crunch,” Micron CEO Sanjay Mehrotra told CNBC earlier this month. That mismatch has pushed SanDisk’s projected gross margins above 80 percent through fiscal 2027, according to analyst estimates reviewed by multiple outlets — a margin level more commonly associated with enterprise software platforms than hardware manufacturers.

SanDisk CEO David Goeckeler called the moment “a fundamental inflection point for SanDisk,” framing the shortage as a multi-year demand shift rather than a transient supply hiccup. His company’s NAND flash memory, the storage medium in the solid-state drives that feed data to AI servers, has become a bottleneck as hyperscalers race to expand data center capacity.

Micron’s Jeremy Werner, senior vice president for core data center products, pointed to the economics at the rack level. “Breakthrough capacity gives data center operators a critical new lever to improve rack-level total cost of ownership,” he said. That framing puts memory makers, not GPU designers, at the center of the next phase of AI infrastructure spending.

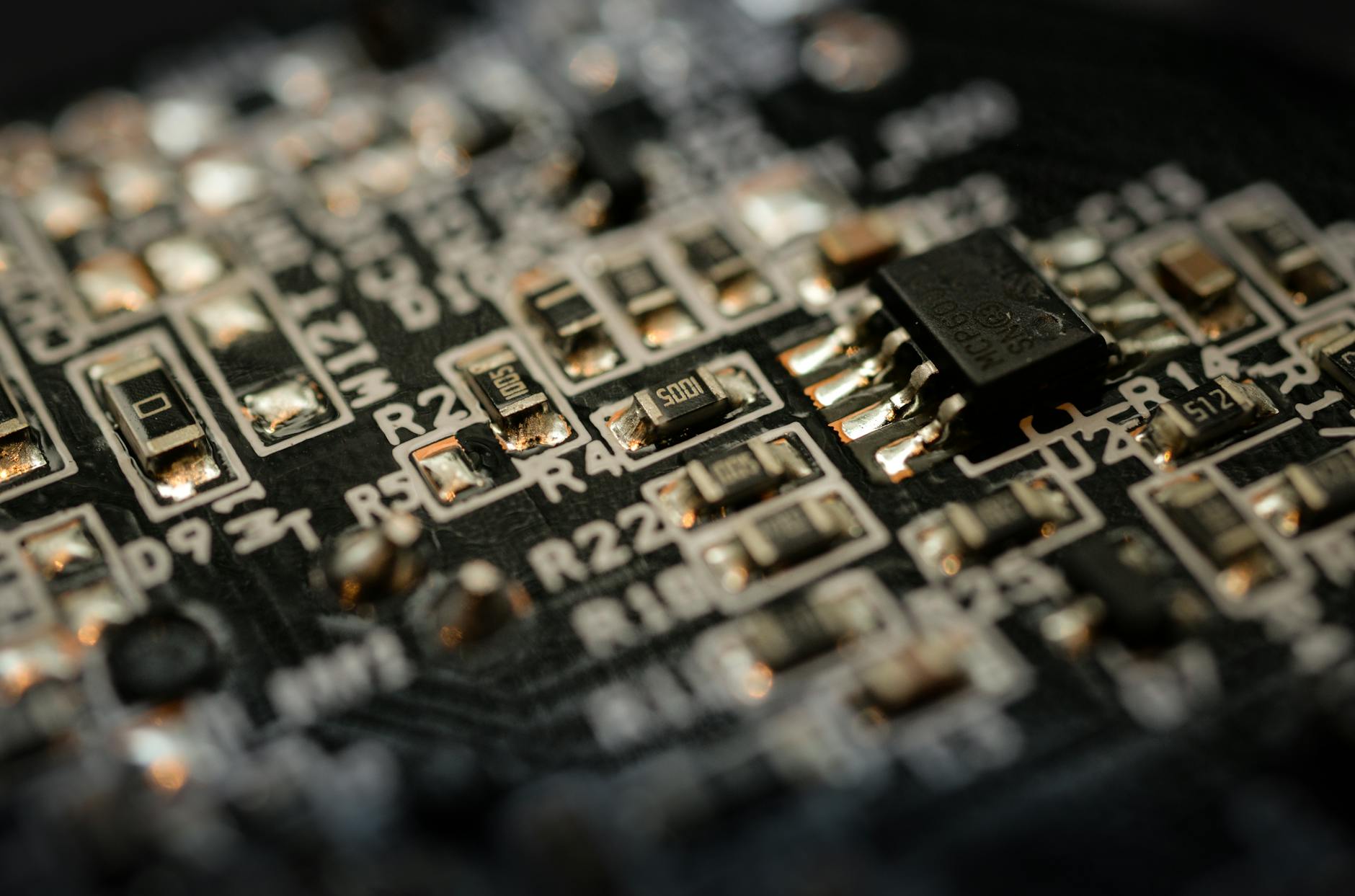

Technical details explain the stock moves. High-bandwidth memory, or HBM, stacks multiple DRAM dies vertically and connects them with microscopic interconnects called through-silicon vias, delivering bandwidths measured in terabytes per second. Every Nvidia H200 and Blackwell GPU ships with HBM attached — and the HBM is now often the part that determines how many systems a hyperscaler can deploy in a given quarter. Production yields on these advanced memory stacks remain stubbornly below those of conventional DRAM, which compounds the supply tightness.
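Where the "terabytes per second" figure comes from can be sketched with simple arithmetic: peak bandwidth per stack is the interface width multiplied by the per-pin data rate, and an accelerator carries several stacks. The Python sketch below is a rough illustration, not a vendor specification. The interface width, pin rate, and stack count are assumed values chosen for illustration and do not come from the reporting above.

```python
def stack_bandwidth_gb_s(interface_bits: int, pin_rate_gbit_s: float) -> float:
    """Peak bandwidth of one memory stack in gigabytes per second."""
    return interface_bits * pin_rate_gbit_s / 8  # 8 bits per byte


# Assumed, illustrative values (not taken from the article):
INTERFACE_BITS = 1024        # data-interface width per stack, in bits
PIN_RATE_GBIT_S = 6.4        # per-pin data rate, in gigabits per second
STACKS_PER_ACCELERATOR = 6   # hypothetical number of stacks on one accelerator

per_stack = stack_bandwidth_gb_s(INTERFACE_BITS, PIN_RATE_GBIT_S)
per_accelerator_tb_s = per_stack * STACKS_PER_ACCELERATOR / 1000

print(f"{per_stack:.1f} GB/s per stack")
print(f"{per_accelerator_tb_s:.2f} TB/s per accelerator")
```

Under these assumptions a single stack peaks at roughly 819 GB/s, and six of them together reach about 4.9 TB/s. That is why the number of stacks a supplier can deliver translates so directly into the number of systems a buyer can deploy.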

Mechanically, the effect is straightforward. AI training clusters require memory bandwidth that existing supply lines were not built to provide. When Nvidia ships a GPU, the attached high-bandwidth memory is often the binding constraint on system throughput — and the memory makers, not the GPU designers, capture the margin on that constraint.

Margins have broken free of historical norms.

SanDisk’s 80 percent-plus gross margin projection would have been unthinkable three years ago, when memory makers routinely cycled between 30 and 50 percent margins depending on where they sat in the boom-bust curve. Demand is now being driven by capital expenditure budgets at the largest cloud providers — buyers that prioritize availability over price, because a delayed data center expansion costs far more than a premium on memory.

But the memory sector’s history counsels against extrapolation. In 1995, a boom driven by DRAM prices collapsed within eighteen months. In 2018, during the last upcycle, Micron shares lost half their value inside a year as Chinese fabs ramped supply. The difference this time, analysts at Business Insider argue, is that the AI workload profile has permanently shifted the demand curve. Inference, not just training, is memory-hungry, and the volume of inference workloads is still accelerating as enterprises move models into production.

Another structural factor weighs on the supply side. Consolidation has left the memory industry with three major players (Micron, Samsung, and SK Hynix), with SanDisk holding a strong position in NAND flash. None of them has an incentive to flood the market with supply when margins are running at all-time highs. Discipline, for now, is paying better than volume.

How fast fabrication capacity can expand determines the forward picture. Micron has committed to new fab construction in the United States and India. SanDisk is expanding its joint venture with Kioxia in Japan. But wafer fabrication equipment lead times run eighteen to twenty-four months, so meaningful supply relief arrives no sooner than late 2027. Until then, the memory makers sit at the narrowest point in the AI supply chain.

And they are pricing accordingly.

Avery Lin

Markets editor covering US equities, single-name stocks and quarterly earnings. Reports from New York.